... that your AWS Glue crawler recorded. Run this code: medicare = spark.read.format("com.databricks.spark.csv").option("header", "true").option("inferSchema", ...

Read the Iris dataset from the CSV file into a Spark DataFrame: df = sqlc.read.format('com.databricks.spark.csv').options(header = 'true', inferschema ...

I'm new to Spark and I'm trying to read CSV data from a file with Spark. Here's what I am doing: sc.textFile('file.csv').map(lambda line: line.split...
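The question above splits each line on commas by hand. Here is a minimal sketch in plain Python (no Spark needed; the sample line and the helper names `naive_split` and `parse_csv_line` are made up for illustration) of why that can go wrong when a quoted field contains a comma:

```python
import csv


def naive_split(line):
    # Splits on every comma, including commas inside quoted fields.
    return line.split(",")


def parse_csv_line(line):
    # csv.reader takes any iterable of lines, so wrap the single line in a list.
    return next(csv.reader([line]))


# Hypothetical sample record with a comma inside a quoted field.
line = 'Alice,"Portland, OR",42'

print(naive_split(line))     # 4 pieces, the quoted field is cut in two
print(parse_csv_line(line))  # 3 fields, quotes handled
```

In Spark, the same parser could be applied per line in place of the bare split, though the DataFrame CSV reader used in the other snippets is usually the simpler route.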

Jun 14, 2020 · PySpark supports reading a CSV file with a pipe, comma, tab, space, or any other ... All HDFS commands take resource path as arguments.

Mar 8, 2021 — The first will deal with the import and export of any type of data: CSV/text file, Avro, JSON, etc. I work on a virtual machine on Google Cloud ...

To write a DataFrame you simply use the methods and arguments to the ... PyArrow lets you read a CSV file into a table and write out a Parquet file, as described ...

from pyspark.sql import SQLContext sqlContext = SQLContext(sc) df = sqlContext.read.format('com.databricks.spark.csv').options(header='true', ...

Dec 30, 2015 — And load your data as follows: df = ( sqlContext .read.format("com.databricks.spark.csv") .option("header", "true") .option("inferschema", "true") ...

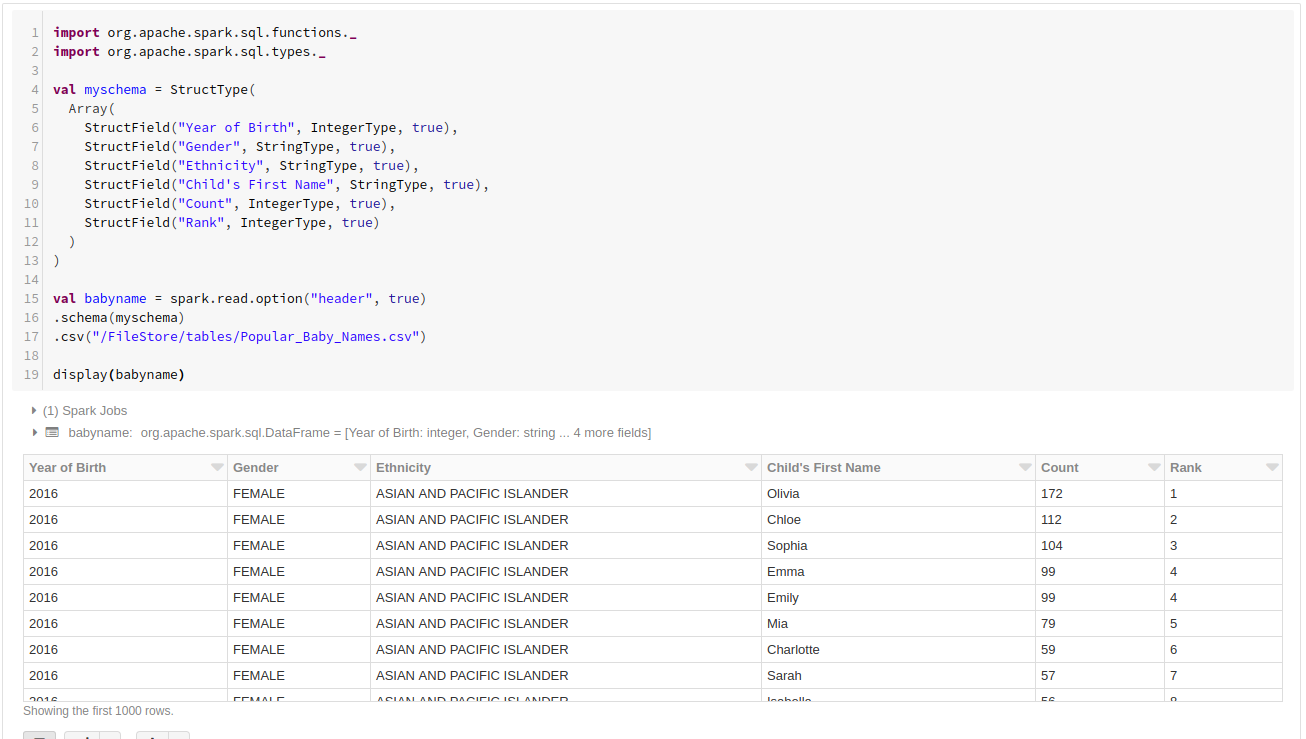

Jan 10, 2019 — In this blog post, we will learn how to read a CSV file through Spark and infer ... The event-date column is a timestamp with following format ...

Mar 9, 2021 — Options; Examples. Options. See the following Apache Spark reference articles for supported read and write options. Read.

Python ...

9 hours ago — Use Apache Spark to read and write data to Azure SQL.


Apr 20 ... How to Export SQL Server Table to CSV using Python. Sep 13, 2020 · Step 2: ... 4 hours ago — asked Feb 13 ... Introduction to DataFrames Python. adult_df = spark.read. \ format("com.spark.csv").


\ option("header", ...

6 hours ago — Read CSV file with Newline character in PySpark without “multiline = true” option. Below is the sample CSV file with 5 columns and 5 rows.

Nov 26, 2019 — load is a general method for reading data in different formats. You have to specify the format of the data via the method .format, of course. .csv (both ...

Jul 24, 2019 — /nameservice1/user/edureka_168049/Structure_IT/sparkfile.csv") ... df.collect() val df = spark.read.option("header","true").option("inferSchema" ...

Oct 14, 2019 — .builder \ .appName( "Python Spark SQL basic example: Reading CSV file without mentioning schema" ) \ .config( "spark.some.config.option" ...

CSV, JSON, Parquet, ORC, JDBC/ODBC, Plain-text files ... dataFrameReader = spark.read print "Type: " + str(type(dataFrameReader)) ... #Specifies the input data source format. .format(source)\ #Adds an input option for the underlying data ...

Nov 25, 2020 — Just like Comma Separated Value (CSV) is a type of file format, Parquet ... spark.read.format("parquet").load("hdfs://user/your_user_name/data/ ...

Azure Databricks read table, Aug 29, 2019 · It excels at big data batch and stream ... There are a couple of options to set up in the Spark cluster configuration. Jun 14 ... Using below pyspark code to read the above csv file from DBFS in Azure ...

Jan 27, 2021 - The Spark CSV data source API supports reading a multiline CSV file (one whose records contain newline characters) by using spark.read.option("multiLine", true).
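To show what a multiline record looks like, here is a small sketch that uses Python's standard csv module rather than Spark. The sample data is invented for illustration. It contrasts counting physical lines with quote-aware parsing, which is roughly the gap the multiLine option is meant to close:

```python
import csv
import io

# Hypothetical sample: the record with id 1 has a newline inside a quoted field.
raw = 'id,comment\n1,"first line\nsecond line"\n2,plain\n'

# Line-oriented reading sees four physical lines.
physical_lines = raw.splitlines()

# A quote-aware parser sees a header row plus two records.
records = list(csv.reader(io.StringIO(raw, newline="")))

print(len(physical_lines))
print(records)
```

A reader that treats every physical line as one record would split the first record in two, which is why such files need a quote-aware, multiline-capable reader.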

//Reading file without options.
